Container orchestration with Kubernetes

What is a container?

Read about containerization before moving on to Kubernetes.

What is Kubernetes?

Kubernetes is an open-source container orchestrator designed to automate the deployment, scaling, and operation of application containers in public and private clouds. It provides the following features:

- Provisioning hosts

- Instantiating containers on a host

- Restarting failing containers

- Linking containers together to be able to communicate with each other

- Exposing containers as services outside of the cluster

- Scheduling and running containers on clusters of virtual machines

Architecture of a Kubernetes cluster

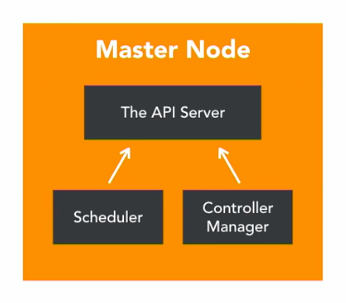

The Master Node manages the Kubernetes cluster as a whole. It handles the communication, scheduling, and control of containers in the cluster through three components: the API Server, the Scheduler, and the Controller Manager.

API Server

Enables interaction with the Kubernetes APIs and exposes various services for container management.

Scheduler

Watches for pods that have been created but do not have a node assigned yet, and assigns each such pod to run on a specific node.
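The assignment step described above can be sketched in a few lines of Python. This is a toy model, not the real scheduler's algorithm: the `Node` and `Pod` classes, the `cpu_free` and `cpu_request` fields, and the "most free CPU wins" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    cpu_free: int  # free CPU in millicores (hypothetical field for this sketch)


@dataclass
class Pod:
    name: str
    cpu_request: int
    node_name: Optional[str] = None  # None means "no node assigned yet"


def schedule(pods: list[Pod], nodes: list[Node]) -> None:
    """Assign each unscheduled pod to the fitting node with the most free CPU."""
    for pod in pods:
        if pod.node_name is not None:
            continue  # already assigned to a node
        candidates = [n for n in nodes if n.cpu_free >= pod.cpu_request]
        if not candidates:
            continue  # pod stays pending until capacity becomes available
        best = max(candidates, key=lambda n: n.cpu_free)
        pod.node_name = best.name
        best.cpu_free -= pod.cpu_request


nodes = [Node("node-a", 1000), Node("node-b", 500)]
pods = [Pod("web", 600), Pod("cache", 300), Pod("batch", 2000)]
schedule(pods, nodes)
print([(p.name, p.node_name) for p in pods])
# [('web', 'node-a'), ('cache', 'node-b'), ('batch', None)]
```

The `batch` pod requests more CPU than any node has free, so it is left without a node, which mirrors a pod that remains pending.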

Controller Manager

Runs controllers as background threads that execute tasks in the cluster. Each controller takes care of a specific task.

- The node controller monitors the state of the worker nodes.

- The replication controller maintains the correct number of pods for each replication controller object.

- The endpoints controller joins services and pods.

- Service account and token controllers handle access management.
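Controllers generally work by comparing a desired state with the observed state and correcting the difference. The following is a minimal sketch of that idea for the replication case; the `reconcile` function and the pod naming scheme are hypothetical and only illustrate the pattern.

```python
def reconcile(desired_replicas: int, running_pods: list[str], prefix: str = "web") -> list[str]:
    """Return the pod list after one reconciliation pass (toy model)."""
    pods = list(running_pods)
    # Too few pods: create replacements until the count matches.
    next_id = 0
    while len(pods) < desired_replicas:
        name = f"{prefix}-{next_id}"
        if name not in pods:
            pods.append(name)
        next_id += 1
    # Too many pods: remove the extras.
    del pods[desired_replicas:]
    return pods


print(reconcile(3, ["web-0"]))           # ['web-0', 'web-1', 'web-2']
print(reconcile(1, ["web-0", "web-1"]))  # ['web-0']
```

In a real cluster this comparison runs continuously, so a pod that fails is replaced on a later pass without manual intervention.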

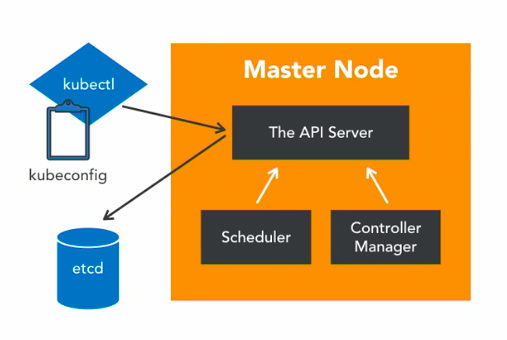

Etcd

Etcd is a distributed key-value store that Kubernetes uses to store its data, such as job scheduling information, pod details, and state information.
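To make the key-value idea concrete, here is a toy in-memory store with prefix lookups. It is not the etcd client API; the `ToyKeyValueStore` class and the key layout shown are assumptions chosen to illustrate how cluster objects can be stored under hierarchical keys.

```python
class ToyKeyValueStore:
    """In-memory stand-in for a key-value store (illustrative only)."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str):
        return self._data.get(key)  # None if the key is absent

    def list_prefix(self, prefix: str) -> dict[str, str]:
        """Return all entries whose key starts with the prefix, sorted by key."""
        return {k: v for k, v in sorted(self._data.items()) if k.startswith(prefix)}


store = ToyKeyValueStore()
# Hypothetical keys and values for this sketch.
store.put("/registry/pods/default/web-0", "phase=Running")
store.put("/registry/pods/default/web-1", "phase=Pending")
store.put("/registry/services/default/web", "port=80")

print(list(store.list_prefix("/registry/pods/")))
# ['/registry/pods/default/web-0', '/registry/pods/default/web-1']
```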

Kubectl

Kubectl is the command-line interface used to interact with Kubernetes. It reads its configuration from a kubeconfig file, which contains the server and authentication details needed to access the API server.
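The shape of a kubeconfig can be illustrated with a plain Python dictionary that mirrors its top-level fields (`clusters`, `users`, `contexts`, `current-context`). The cluster name, server address, user name, and token below are placeholders, and `resolve_current_context` is a hypothetical helper, not part of kubectl.

```python
# A kubeconfig is normally a YAML file; it is shown here as a dict for simplicity.
kubeconfig = {
    "apiVersion": "v1",
    "kind": "Config",
    "current-context": "dev",
    "clusters": [
        {"name": "dev-cluster", "cluster": {"server": "https://203.0.113.10:6443"}},
    ],
    "users": [
        {"name": "dev-user", "user": {"token": "REDACTED"}},
    ],
    "contexts": [
        {"name": "dev", "context": {"cluster": "dev-cluster", "user": "dev-user"}},
    ],
}


def resolve_current_context(config: dict) -> tuple[str, dict]:
    """Return (API server URL, user credentials) for the active context."""
    ctx_name = config["current-context"]
    context = next(c["context"] for c in config["contexts"] if c["name"] == ctx_name)
    cluster = next(c["cluster"] for c in config["clusters"] if c["name"] == context["cluster"])
    user = next(u["user"] for u in config["users"] if u["name"] == context["user"])
    return cluster["server"], user


server, credentials = resolve_current_context(kubeconfig)
print(server)  # https://203.0.113.10:6443
```

A context simply pairs a cluster with a user, which is why switching contexts is enough to point the same tool at a different API server.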

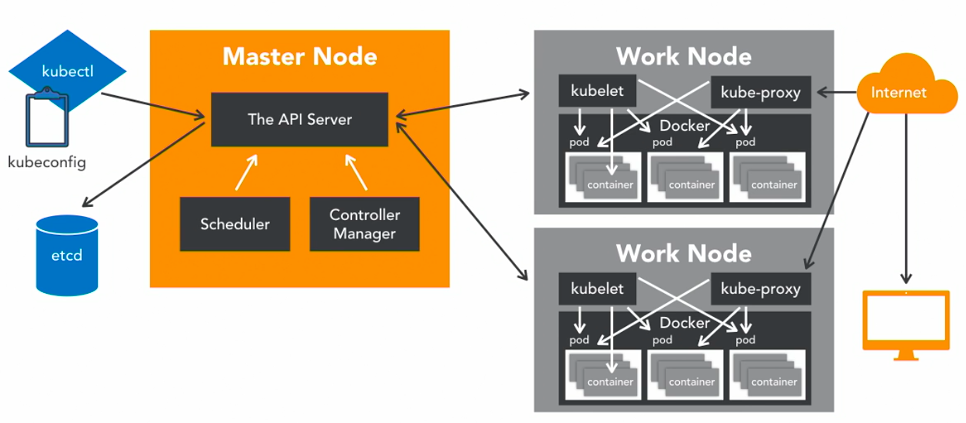

Worker Nodes

Worker nodes host the applications and communicate with the Master Node through the kubelet, an agent process that runs on each node. The kubelet communicates with the API server to check whether pods have been assigned to its node, runs the pod containers via the container engine, and mounts the pod volumes and secrets. It also tracks pod and node state and health and reports this information to the Master as required.

Usually a container runtime such as Docker is installed on each worker node and works with the kubelet to run containers on the node.

The Docker daemon runs the containers. Tightly coupled containers of an application are grouped together in a pod, the smallest unit that can be deployed and scheduled in Kubernetes. The containers in a pod can share storage, namespaces, an IP address, etc. Because they are co-located and share resources, they are always scheduled together on the same node. Worker nodes can be exposed to the internet via a load balancer.
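One way to picture the kubelet's check against the API server is as a comparison between the pods assigned to the node and the pods currently running on it. The sketch below is a simplification under that assumption; `sync_node` and the flat pod dictionaries are hypothetical and do not reflect the kubelet's actual implementation.

```python
def sync_node(node_name: str, api_pods: list[dict], running: set[str]) -> tuple[list[str], list[str]]:
    """Return (pods to start, pods to stop) for one sync pass on a node."""
    # Pods that the API server reports as assigned to this node.
    assigned = {p["name"] for p in api_pods if p.get("nodeName") == node_name}
    to_start = sorted(assigned - running)  # assigned but not yet running
    to_stop = sorted(running - assigned)   # running but no longer assigned
    return to_start, to_stop


api_pods = [
    {"name": "web", "nodeName": "node-a"},
    {"name": "cache", "nodeName": "node-b"},
    {"name": "batch", "nodeName": None},  # not assigned to any node yet
]
print(sync_node("node-a", api_pods, running={"old-job"}))
# (['web'], ['old-job'])
```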

Kube-Proxy

Kube-Proxy is a network proxy and load balancer for services, running on each worker node. It handles network routing for TCP and UDP packets and performs connection forwarding, so that traffic from other resources that want to communicate with a pod is routed to that pod.
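The load-balancing part can be sketched as choosing one backend pod for each incoming connection. The example below uses simple round-robin selection as an assumption; the real Kube-Proxy typically programs kernel-level rules (for example iptables or IPVS) rather than choosing backends in application code, and the `ServiceProxy` class and pod addresses are hypothetical.

```python
import itertools


class ServiceProxy:
    """Toy round-robin selector over the pod endpoints behind a service."""

    def __init__(self, backends: list[str]) -> None:
        if not backends:
            raise ValueError("a service needs at least one backend pod")
        self._cycle = itertools.cycle(backends)

    def pick_backend(self) -> str:
        """Return the pod address that should receive the next connection."""
        return next(self._cycle)


proxy = ServiceProxy(["10.0.0.5:8080", "10.0.0.6:8080"])
picks = [proxy.pick_backend() for _ in range(4)]
print(picks)
# ['10.0.0.5:8080', '10.0.0.6:8080', '10.0.0.5:8080', '10.0.0.6:8080']
```

Clients only see the service address; which pod answers a given connection is decided at this forwarding step.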